For years, KIT SC eSports faced a classic organizational nightmare: knowledge fragmentation. How do we get the right information to the right people exactly when they need it?

Our “data stack” was essentially a graveyard of good intentions. We had an ancient Facebook Group, hundreds of Google Drive folders, aged Dropbox shares, a self-hosted cloud, and a long-abandoned MediaWiki. Meeting protocols spanning a decade were scattered everywhere, burying decisions, arguments, and club history. Finding the “most recent” decision on a topic from 2021 was practically impossible.

Over the last year, my primary goal as Chairman was simple but massive:

It should be as easy and attractive as possible to take over responsibility in our club.

To do that, we needed to democratize our data.

The Base: Docmost & Discord integration

I envisioned a central hub that connected all the dots—easy to update, preserving historical context, and effortlessly accessible.

That’s when I found Docmost. It’s an open-source wiki with a Notion-like feel, Markdown support and native integrations. Best of all: It’s built on Nest.js and TypeScript. Since that’s my preferred stack, extending the software to our specific needs felt like a no-brainer to me.

The first hurdle was onboarding. We don’t manage standard “user accounts” or passwords for our members, but virtually everyone is on Discord. Using discord.js (an awesome library), I built an auth flow in a few hours. Members now log into the wiki via Discord, automatically inheriting the correct access permissions based on their server roles.

The Search: Making RAG Work for Us

A standard wiki is great for structured navigation, but club members don’t search like computers. They ask human questions:

- What did we decide last time on the budget for our Summer Event?

- What’s the current process for getting travel expenses reimbursed?

- Who played in the 2021 UEM League of Legends team?

This is where current LLM-based AI truly shines. Our Markdown-based documents were the perfect foundation for a semantic search using Retrieval-Augmented Generation (RAG) – and a ridiculously fun side project for a few evenings.

Chunking and Embedding

Leveraging Docmost’s event-driven architecture, it’s trivial to detect when a document changes. I wrote a mechanism to extract context-aware blocks of text, splitting documents based on heading levels and empty paragraphs. This aligns perfectly with the format of our meeting protocols.

Each block, sub-block, and individual sentence (meeting a minimum length) is then vectorized using a locally hosted Ollama BGE-M3 model.

This dual approach means both broad context and highly specific details are embedded into our vector store, which I integrated into the Docmost stack using pgvector. To optimize performance, each database row holds a hash of the content (preventing redundant embeddings during edits) alongside exact document references. All of this, using only a few lines of code with Vercels amazing AI SDK for Typescript.

The Scoring Algorithm

When a user asks a question, we run a similarity search against the vector store using L2 distance. But we don’t just take the top hit.

Matches are ranked and aggregated. Every chunk matched within a single document contributes to that document’s overall score, weighted using a decreasing harmonic scale. If a document has 10 matching chunks, the first match provides its full similarity score, the second provides 1/2, the third 1/3, and so on. This ensures that both the quality and frequency of matches dictate the final document ranking.

We also supplement this with a hybrid approach: passing AI-generated buzzwords into Docmost’s standard search engine to broaden the net of relevant documents. Finally, documents are injected with metadata (Creation Date, Author, Last Update), allowing the LLM to infer the most recent and relevant decisions, effectively overriding outdated discussions.

Lastly, the “most recent” meeting protocols are automatically added to the AIs context with every request. This way, most of the times our agent doesn’t even need to call a tool for further information.

The Data: Protecting Personal Information

While our vector embeddings run locally, the heavy lifting of answering questions is handled by Gemini via Google Vertex. Even though Google officially states that Vertex inputs aren’t used for model training, we wanted an extra layer of privacy.

Before any text hits the LLM, it goes through a custom anonymization pipeline.

Using our member database (which includes real names, (e-mail) addresses and Game Tags), the system extracts personal data from the retrieved documents. I initially experimented with Microsoft Presidio, but it didn’t quite hit the accuracy I needed. Instead, I built an anonymization engine that detects members across multiple documents and generates an “anonymization map.” Real names are swapped with random names from a placeholder database. This mapping state is preserved across tool calls, ensuring consistency.

The Glue: Tying it All Together

So, what happens when someone actually asks a question like, “What did Nico say about the summer event in the latest board meeting?” – Here is the pipeline:

- Semantic Query Tool: Vectorizes the exact question.

- Docmost Search Tool: Queries standard keywords like “Summer Event”, “Board Meeting”.

- Ranking & Metadata: Aggregates, scores, and enriches the retrieved documents.

- Anonymization: Masks personal data in both the documents and the prompt.

- LLM Context Injection: The data is compiled and sent to the LLM. The prompt looks something like this:

You are a knowledge manager of an esports organisation, tasked with extracting, enhancing, and summarizing specific information from a knowledge database about the esports club.

Your goal is to provide clear, concise, and accurate summaries that address the user's questions accurately.

[...]

Guidelines for your response are:

[...]

Those documents match the users question:

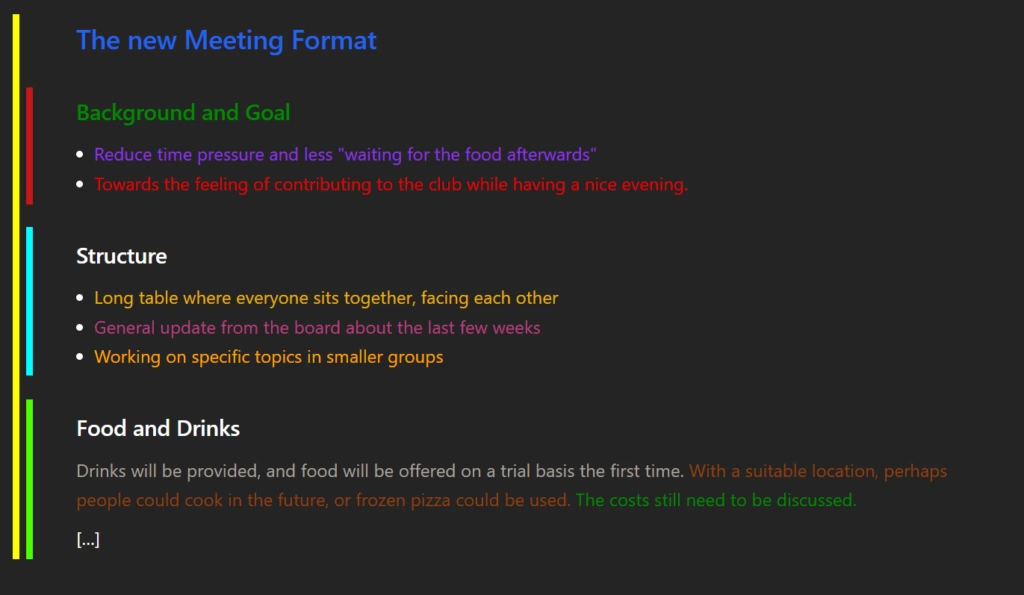

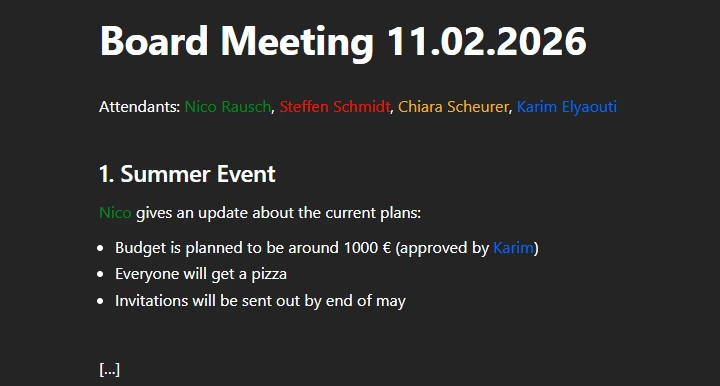

<document id="abc123" title="Board Meeting 11.02.2026" createdAt="2026-02-11" lastUpdate="2026-02-11">

Attendants: Michael Jackson, Bad Bunny, Taylor Swift, Bruno Mars

1. Summer Event

Michael Jackson gives an update about the current plans:

- Budget is planned to be around 1000 € (approval by Bruno Mars pending)

- Everyone will get a pizza

- Invitations will be sent out by end of may

</document>

[...]

With respect to your information, please answer the following question:

> What did Michael Jackson say about the summer event in the latest board meeting?

- Final Pass: Once the LLM returns an answer, we parse any document references (formatted strictly as

[Document Name](Document ID)) and run a de-anonymization pass to restore real names.

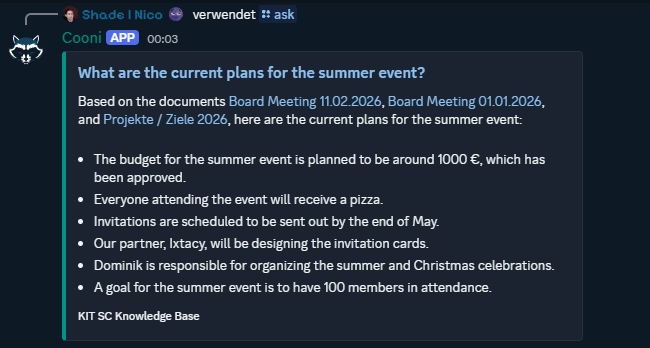

The final output to the above question would be something like this:

According to the document Board Meeting 12.02.2026, Nico said that the budget is planned to be around 1,000 €, everyone attending the event will get a pizza, and the invitations are planned to go out until the end of May. Also, in a previous meeting (Board Meeting 01.01.2026), it was discussed that the invitation cards will be designed by our partner Ixtacy.

The Interface: Meeting Users Where They Are

To make this immediately useful, we brought it straight to where our members already hang out: Discord.

I added a simple /ask slash command to our custom Discord bot, Cooni. The bot securely forwards the request to the wiki API, processes the RAG pipeline, and renders the response back in Discord-compatible Markdown. User roles are propagated throughout the entire flow, guaranteeing that members only receive answers based on documents they are authorized to read.

Now, everyone can seamlessly consume our club’s history and contribute to its future.

What Comes Next?

This setup took about three evenings to build late last year. It’s incredible how quickly a powerful open-source project – with just a few targeted extensions -can transform into the core infrastructure of our daily work. This project was also a great lesson in building AI features that actually solve real problems, rather than just slapping a “shiny AI button” onto an app.

Next up, we’ll be using this infrastructure (and a bit of AI help) to migrate all those legacy meeting protocols into the new Wiki automatically. We also want to give our bot a bit more personality as the mascot and heart of our club. Because who wants to chat with a boring bot? 🤖

As always, feel free to reach out with questions or feedback. I’d love to discuss!